CosmoMC Readme

June 2021. Check the web

page for the latest version.

Contents

Specific documentation

Introduction

CosmoMC is a Fortran 2008 Markov-Chain Monte-Carlo (MCMC) engine for exploring cosmological

parameter space, together with Fortran and python code for analysing Monte-Carlo samples and importance sampling (plus a suite of scripts for building grids of runs, plotting and presenting results).

The code does brute force (but accurate) theoretical matter power spectrum and Cl calculations with

CAMB. See the original paper

for an introduction and descriptions, and up-to-date sampling algorithm details. It can also be compiled as a generic sampler without using any cosmology codes.

On a multi-processor machine you can start to get good results in a few of hours. On single processors

you'll need to set aside rather longer. You can also run on a cluster.

You may also want to consider the new Cobaya sampler,

which is based on Python and also allows use of other samplers or cosmology codes.

By default CosmoMC uses a simple Metropolis algorithm or an optimized fast-slow sampling method (which works for likelihood with many fast nuisance parameters like Planck).

The program takes as inputs estimates of central values and posterior uncertainties of the various parameters. The proposal density can use information about parameter correlations from a supplied covariance matrix: use one if possible as it will significantly improve performance. Covariance matrices are supplied for common sets of default base parameters. If you compile and run with MPI (to run across nodes in a cluster), there is an option to dynamically

learn the proposal matrix from the covariance of the post-burn-in samples so far. The MPI option also allows you to terminate the computation automatically when a particular convergence criterion is matched. MPI is strongly recommended.

There are two programs supplied cosmomc and getdist. The first is a compiled Fortran code

does the actual Monte-Carlo and produces sets of .txt chain files and (optionally) .data output

files (the binary .data files include the theoretical CMB power spectra

etc.). The "cosmomc" program can also do post processing on .data files, for example doing importance

sampling with new data. The "getdist" program analyses the .txt files calculating statistics

and outputs files for the requested 1D, 2D and 3D plots (and could be used independently of the main cosmomc program), and there are Fortran and python versions.

The Python version is is the most up to date, and output is not the same as the fortran version (which is mainly provided for backwards checking and reproducing Planck results). GetDist is included with CosmoMC, but can be also run quite separately from the standalone python package. The "cosmomc" program also does post processing on .data files, for example doing importance

sampling with new data.

Please e-mail details of any bugs or enhancements to Antony Lewis. If you have any questions please ask in the CosmoCoffee computers and software forum. You can also read answers to other people's questions there.

Downloading and Compiling

To run compile and run CosmoMC you will need a Fortran 2008 compiler. Various options include

- Intel Fortran 14 or higher (earlier versions will not work). This is usually installed on supercomputers.

- The latest gfortran (part of GCC 6) compiled from source (currently released versions do not work).

- A pre-configured virtual development environment, available from CosmoBox.

This is good for consistent development and debugging, and ensures required libraries are also available. There are both desktop virtual machine (and Docker) images, and an Amazon AWS image that can be used for running clusters in the cloud.

If you just want to analyse chains and run plots, you only need python 2.7+ or 3.5+ (see plotting below).

You then need to download and install the program:

- Register for the download, and install by cloning from git using

git clone --recursive https://github.com/cmbant/CosmoMC.git

- Change to the CosmoMC directory and run "make"

To use Planck data you need to download and install the Planck likelihood code and data first. See the Planck readme.

The Makefiles used by "make" should detect ifort and gfortran, but if necessary edit the relevant top parts of the Makefile in the source directory depending on what system you are using.

- To output chains you might want to make a chains subdirectory, or (perhaps better) symlink to somewhere on your data disk.

Note that the latest version of CosmoMC is not the same as used for the Planck 2018 final analysis. See the github planck2018 branch for the exact CAMB and other settings used for that.

Some people have reported problems using Intel MPI 2019, earlier versions should be OK.

By default the build uses MPI. Using MPI simplifies running several chains and proposal optimization. MPI can be used with OpenMP for parallelization of each chain. Generally you want to use OpenMP to use all the shared-memory processors on each node of a cluster, and MPI to run multiple chains on different nodes (the program can also just be run on a single CPU).

For code editing some project files are available for standard development environments:

- A CodeBlocks project file is provided (cosmomc.cbp), which can also be used to run and debug from the cross-platform CodeBlocks IDE.

- On Windows using Visual Fortran, open the solution file in the VisualStudio folder.

Set test.ini (or your .ini file) as the program argument. This is not set up to compile with MPI, so mainly useful for development. Visual Fortran does not use the provided Makefiles, but works out dependencies automatically (and has much better debugging support than gdb with CodeBlocks).

See BibTex file for relevant citations.

Running and input parameters

See the supplied params.ini file for a fairly self-explanatory list of

input parameters and options. The file_root entry gives the root name for all files produced. Running using MPI on a cluster is recommended if possible as you can automatically handle convergence testing and stopping.

MPI runs on a cluster

When you run with MPI (compiled with -DMPI, the default), a number of chains will be run according to the number of processes assigned by MPI when you run the program. Output file names will have "_1","_2" appended to the root name automatically. You may be able to run over 4 nodes using e.g.

mpirun -np 4 ./cosmomc params.ini

There is also a supplied script python/runMPI.py that you may be able to adapt for submitting jobs to a job queue, e.g.

python python/runMPI.py params

to run using the params.ini parameters file. Run runMPI.py without arguments to see a list of options (number of chains, cores, wall time, etc).

You will probably need to set up a job script template file for your computer cluster (a couple of samples are supplied). See Configuring job settings for your machine. If you want to run batches of chains with different parameter or data combinations, see the help file on setting up parameter grids.

In the parameters file you can set MPI_Converge_Stop to a convergence criterion (see Convergence Diagnostics. Small numbers are better convergence; generally need R-1 < 0.1, but may want much smaller especially for importance sampling or accurate confidence limits. You can also directly impose an error limit on confidence values - see the parameter input file for details). If you set MPI_LearnPropose = T, the proposal density will be updated using the covariance matrix of the last half of all samples (across chains) so far.

Using MPI_LearnPropose will significantly improve performance if you are adding new parameters for which you don't have a pre-computed covariance matrix. However adjusting the proposal density is not strictly Markovian, though asymptotically it is as the covariance will converge. The burn in period should therefore be taken to be larger when learning the proposal density to allow for the time it takes the covariance matrix to converge sufficiently (though in practice it probably doesn't matter much in most cases). Note also that as the proposal density changes the number of steps between independent samples is not constant (i.e. the correlation length should decrease along the chain until the covariance has converged). The stopping criterion uses the last half of the samples in each chain, minus a (shortish) initial burn in period. If you don't have a starting covariance matrix a fairly safe thing to do is set ignore_rows=0.3 in the GetDist parameter file to skip the first chunk of each chain.

You can also set the MPI_R_StopProposeUpdate parameter to stop updating the proposal density after it has converged to R-1 smaller than a specified number. After this point the chain will be strictly Markovian. The number of burn in rows can be calculated by looking at the params.log file for the number of output lines at which R-1 has reached the required value.

If things go wrong check the .log and any error files in your cosmomc/scripts directory.

Input Parameters

The input .ini files contain sets of name-value pairs, in the form key = value.

Keys setting input values for specific parameters can be set using numbered key values, param[omegabh2]=xxx yy... for omegabh2. The names of the various parameters are set in .paramnames files: for cosmology parameters see params_CMB.paramnames. Likelihoods may also have nuisance parameters that need to be varied: see the .paramnames files in the ./data/ directory.

.ini files can include other ini files using DEFAULT(xx.ini) or INCLUDE(yy.ini), so there's no need to repeat lots of settings when you do different similar runs. The sample test.ini file includes .ini files in the batch2/ directory, set up for Planck runs. The action=4 setting just calculates test likelihoods for one model (the central value of your parameter ranges) and writes out the result. Set action=0 to run a test chain. Using DEFAULT in a .ini files means you can include default settings, but override ones of interest for a particular run.

- Parameter limits and proposal density

The parameter limits, estimated distribution widths and starting points are listed

as the param[paramname] variables for each parameter, of the form

param[paramname] = center min max start_width propose_width

The start_width entry determines the randomly chosen dispersion of the starting position about the given centre, and should be as large or larger than the posterior (for the convergence diagnostic to be reliable, chains should start at widely separated points). Chains are restricted to stay within the bounds determined by min and max. If the parameter is not to be varied in your run, you can just specify the fixed value.

The sampler proposes changes in parameters using a proposal density function with a width determined by propose_width (multiplied by the value of the global propose_scale parameter). The propose_width should be of the order of the conditional posterior width (i.e. the expected error in that parameter when other parameters are fixed). If you specify a propose_matrix

(approximate covariance matrix for the parameters), the

parameter distribution widths are determined from its eigenvalues instead, and

the proposal density changes the parameter eigenvectors. The covariance

matrix can be computed using "getdist" once you have done one run - the file_root.covmat file.

The planck_covmats/ directory is supplied with many you can use. The covariance matrix does not have to include all the parameters that are used - zero entries will be updated from the input propose widths of new parameters (the propose width should be of the size of the conditional distribution of the parameter - typically quite a bit smaller than the marginalized posterior width; generally too small is better than too large). The scale of the proposal density relative to the covariance is given by the propose_scale parameter. If your propose_matrix is significantly broader than the expected posterior, this number can be decreased.

If you don't have a propose_matrix, you can use estimate_propose_matrix = T to automatically estimate it by numerical fitting about the best fit point (with action=2 to stop, or action=0 to continue straight into an MCMC run).

- Sampling method

Set sampling_method=1 to use a vanilla Metropolis algorithm (in the optimal fast/slow subspaces if use_fast_slow is true).

Set sampling_method=7 to use a fast-slow dragging algorithm (Neal 2005), which allows fast nuisance parameters to be handled efficiently; this is the default for Planck runs.

Other sampling methods that were implemented in previous version have not currently been updated for the latest version.

- CAMB's numerical accuracy

The default accuracy is aimed at WMAP. Set high_accuracy_default = T to target ~0.1% accuracy on the CMB power spectra from CAMB. This is sufficient for numerical biases to be small compared to the error bar for Planck; for an accuracy study see arXiv:1201.3654. You can also use the accuracy_level parameter to increase accuracy further, however this is numerically inefficient and is best used mainly for numerical stability checks using importance sampling (action=1).

- Best-fit point

Set action =2 to just calculate the best-fit point and stop. This uses

Powell's 2009 BOBYQA bounded minimization routine, which works from two parameters up to hundreds of parameters, but does require that the likelihood return a valid number at all points between the bounds given on the base parameters in the input .ini file. The algorithm starts at the point given by the center parameter values given in the .ini file, and iterates until the parameter values are converged to within a radius of max_like_radius (in normalized units, e.g. fraction of a standard deviation or propose_width). The minimization will also stop if the log likelihood has converged to within minimize_loglike_tolerance.

The best-fit values (and derived and nuisance parameters) are output to a file called file_root.minimum. The method works for parameters with hard bounds that truncate the posterior, e.g. for neutrino mass mnu>0.

Minimization with action=2 will work more reliably if you have a propose_matrix to approximately orthogonalize the likelihood. You can also run minimizations from multiple random starting points to check that they converge: run with e.g. "mpirun -np 4" and minimize_random_start_pos=T to check four minimizations - the final output will be the point with the best fit of all and a warning will be printed if there there are outliers.

- Data file output

Since consecutive points are correlated and the output files can get quite large, you may want to thin the chains automatically: set the indep_sample parameter to determine at what frequency full information is dumped to a binary .data file (which includes the Cls, matter power spectrum, parameters, etc). If zero no .data file is generated. You only need to keep nearly uncorrelated samples for later importance sampling. You can specify a burn_in, though you may prefer to set this

to zero and remove entries when you come to process the output samples.

- Threads and run-time adjustment

The num_threads parameter will determine the number of openMP threads (in MPI runs, usually set to the number of CPUs on each node). Scaling is linear up to about 8 processors on most systems, then

falls off slowly. It is probably best to run several chains on 8 or fewer

processors. You can terminate a run before it finishes by creating a file

called file_root_NN.read containing "exit =1" - it will read it in, delete the .read file and stop.

The .read file can also have "num_threads =xx" to change the number of

threads (per chain) dynamically. If you have multiple chains you can create file_root.read which will be read by all the chains. In this case the .read file is not deleted automatically.

- Post-processing (e.g. importance sampling)

The action variable determines what to do. Use action=1 to process

a .data file produced by a previous MCMC run - this is used for importance

sampling with new data, correcting results generated by approximate means,

or re-calculating the theoretical predictions in more detail. If action=1

set the redo_xxx parameters to determine what to do. With redo_add=F you should include all the data you want used for the final result (e.g. if you generated original samples with CMB data, also include CMB data when you importance sample - it will not be weighted twice). With redo_add=T you should include only data you want to add - the new likelihoods are added to those already stored in the chains. PostProcessing is fastest if you have binary .data files from an original run, however if you do not have these you can use redo_from_text=T to read in chains from .txt files.

- Exploring tails and high-significant limits

The temperature setting allows you to sample from P^(1/T) rather

than P - this is good for exploring the tails of distributions, discovering

other local minima, and for getting more robust high-confidence error bars. See also the different sampling_methods.

- Checkpointing

Set checkpoint = T in the .ini file to checkpoint: i.e. generate files so that if the processes are terminated they can be restarted again from close to where they left off. With MPI this setting creates file_root.chk files used to store the current status. To continue a prematurely terminated run, just run again using exactly the same commands and files as originally. Note that if you use checkpointing, but you want to overwrite existing chains of the same name, you must manually delete all the file_root.* files (otherwise it will attempt to continue from where they left off). Please note this feature is not fantastically well tested, please let us know of any problems. Also note that for checkpointing to work the first run must run for at least past the initial burn phase (you can see when the file_root.chk files have been produced), otherwise the run will just start from scratch again.

Output files

- The program produces file_root_1.txt, file_root_2.txt etc, files listing each accepted set of

parameters for each chain; the first column gives the number of iterations staying at

that parameter set (more generally, the sample weight), and the second the likelihood.

- A file_root.paramnames file lists the names and labels of the parameters corresponding to the columns 3+ of the output chain files. By default this is just a copy of the params_CMB.paramnames file read in by the default parameterization specified in params_CMB.f90. Parameter names are strings of numbers and letters without spaces, used reference parameters in input files; the labels are used when making plot axes labels and various output files.

- A file_root.ranges lists the name tags of the parameters, and their upper and lower bounds (including parameters that are not varied, where upper and lower are equal to the fixed value)

- Files file_root_1.dat, file_root_2.dat, etc., if indep_sample is non-zero. These contain full computed

model information at the independent sample points (power spectra etc). These can be used for quick importance sampling using action=1.

- A file_root.log file

contains some info which may be useful to assess performance.

- For post-processing (action=1), the program reads in an existing .data file and processes

according to the redo_xxx parameters. At this point the acceptance multiplicities

are non-integer, and the output is already thinned by whatever the original

indep_sample

parameter was. The post-processed file are output to files with root redo_outroot.

Configuring job settings for your machine

The runMPI.py and runbatch.py submission scripts use a job template file to submit jobs to the queue on your cluster.

The job_script files is supplied as sample, and you may need to make your own template specific to the configuration of your machine, depending on what queuing system you have (e.g. PBS, SLURM, etc.)

Recommended steps are

If necessary you can also edit the submitJob function in python/paramgrid/jobqueue.py, but most things can be configured via the template and .bashrc settings.

You can change the number of chains, nodes, walltime etc when submitting a job by using optional parameters to runMPI.py and runbatch.py: see -h help for available options.

Analysing samples and plotting

There are now several ways you can analyse chain results:

- Using the python/GetDist.py program (which can be run without having a fortran compiler installed).

- With the GetDist Gui interactive program, in python/GetDistGUI.py. See the GetDist GUI readme.

- Programmatically, using the python modules via python scripts.

- Using the getdist program, compiled with Fortran (used by Planck 2015 chains, but now deprecated)

The GetDist programs analyses text chain files produced by the cosmomc program. These are a group of plain text files of the form

xxx_1.txt

xxx_2.txt

...

xxx.paramnames

xxx.ranges

where "xxx" is some root filename, the .txt files are separate chain files, xxx.paramnames gives the names (and optionally latex labels) for each parameter, and xxx.ranges optionally specified bounds for each (named) parameter. The chain .txt files are in the format

weight like param1 param2 param3 ...

The weight gives the number of samples (or importance weight)

with these parameters. like gives -log(likelihood), and param1, param2... are the values of the parameters at each point.

The .paramnames file lists the names of the parameters, one per line, optionally followed by a latex label.

Names cannot include spaces, and if they end in "*" they are interpreted as derived (rather than MCMC) parameters, e.g.

x1 x_1

y1 y_1

x2 x_2

xy* x_1+y_1

The .ranges file gives hard bounds for the parameters, e.g.

x1 -5 5

x2 0 N

Note that not all parameters need to be specified, and "N" can be used to denote that a particular upper or lower limit is unbounded. The ranges are used to determine

densities and plot bounds if there are samples near the boundary; if there are no samples anywhere near the boundary the ranges have no affect on plot bounds, which are chosen appropriately for the range of the samples.

The GetDist program, scripts and gui could be used completely independently of the CosmoMC program if you produce chains (or samples) in the same format

(see the pip-installable python package). If there is just one file of samples, you can also use xxx.txt without the _n number tag that's used to denote separate chains.

Running the GetDist program

The GetDist program processes the chains and produces a set of output files giving marginalized constraints, convergence diagnostics, etc, and optionally some scripts for producing plots. This is convenient for bulk production of parameter constraints and first-look plotting; use the python modules in your own scripts if you need more fine-grained control.

Running using the python version

python python/GetDist.py [distparams.ini] [chains/chainroot]

or using compiled Fortran:

./getdist distparams.ini [chains/chainroot]

Here the parameter input file distparams.ini determines analysis parameters; in the python version this is optional, and if omitted defaults will be used. The chain root name to analyse can be given in the .ini file, or you can specify it as an optional parameter on the command line to quickly analyse different chains with the same settings. The parameter input file should be fairly self-explanatory,

and is in the same format as the cosmomc parameter input file. To make a new .ini file you can edit to customize settings use

python python/GetDist.py --make_param_file myparams.ini

Note that the outputs from the python and fortran versions of GetDist are not identical due to slightly different algorithms, but should usually be consistent to better than the sampling noise for parameter means and limits. Both can produce pre-computed densities for plotting (in the plot_data_dir directory), but the python plotting scripts do not require this - output will be faster and make far fewer files if plot_data_dir is not set.

GetDist Parameters

- GetDist processes the file_root.txt file, and outputs statistics, marginalized

plots, samples plots, and performs PCA (principle component analysis).

- Set ignore_rows to a positive integer to ignore that number of output rows as burn-in, or to a fraction <1 to skip that fraction of each chain's rows.

- Set the parameter_names parameter to specify a .paramnames file with the names and labels for parameters corresponding to the various columns in the chain files (see the supplied examples). If this parameter is empty getdist reads the parameters from the file_root.paramnames file that is output when generating the chains.

-

If you have a derived parameter which has a prior which cuts off when the posterior is non-negligible

you need to either set the limits in a xxx.derived_ranges file analogous to the xxx.paramnames file being when running the chains, or

set the limits[paramname] variables to the corresponding limits when running GetDist. Otherwise

limits are computed automatically. DO NOT use limits[paramname] to change

the bounds on other plots - the program uses the limits information when

it is estimating the posteriors from the samples. Limits for base MCMC and standard derived parameters are set automatically from the .ranges file produced with the chain.

If only one end is fixed you can use N the floating end, e.g. "limitsxx = 0 N" for the tensor amplitude which has a greater than zero prior.

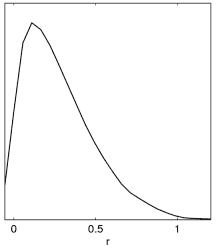

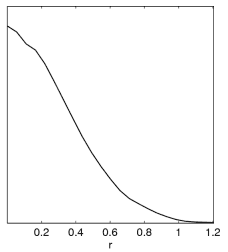

Example: Since often people get this wrong with new parameters, here is an illustration of what happens when generating plots from a tensor run set of chains (with prior r>0):

Incorrect result when no chain.ranges file and limits[r02] is not set. |

Correct result when setting limits[r02]=0 N (or using default .ranges file, which includes the equivalent prior information from paramnames/derived_tensors.derived_ranges). |

- Various parameters determine how sample densities are computed (see the Kernel Density Estimation notes). The smooth_scale_1D and smooth_scale_2D parameters can be set to change the smoothing scale.

For smooth_scale_1D:

- if smooth_scale_1D >= 1 smooth by smooth_scale_1D bin widths

- if smooth_scale_1D> 0 and <1 smooth by Gaussian of smooth_scale_1D standard deviations in each parameter (around 0.2-0.3 is often good); this gets flat distributions nice and smooth without overly broadening well determined parameters

- if smooth_scale_1D< 0 uses automatic smoothing length (changes with parameter)-the overall smoothing length is scaled by abs(smooth_scale_1D) from very crude guess at best overall scale

The behaviour for smooth_scale_2D is similar. Usually the default =-1 setting can be used, which choose smoothing automatically, but changing it can be a good way to enforce a smaller smoothing scale (for example if you have too few samples, the auto-scaling will make the smoothing relatively large, which can sometimes make the results somewhat artificially better than they really are).

The .ini file comments should explain the other options.

Output Text Files

These are all output by the GetDist program, and most can also be viewed on demand by using the menu options in the GetDist GUI.

- file_root.margestats file contains the means, standard deviations and marginalized limits for the different parameters (including derived parameters). Limits to calculate are set using the contourxx input parameters.

- file_root.likestats gives the best fit sample model, its likelihood, and limits from the extremal values of the N-dimensional distribution. Note that MCMC does not generally provide accurate values for the best-fit.

- file_root.converge contains various convergence diagnostics

- file_root.PCA contains a principle component analysis (PCA) for PCA_num parameters, specified in PCA_params parameter. You can specify the mapping

used with PCA_func, for example 'L' to use logs (what you usually

want for positive definite parameters). The output file contains

the analysis including the correlation matrix, eigenvectors and eigenvalues,

as well as the well constrained parameter combinations and their marginalized

errors. This is how you automatically work out constraints of the form

param1^a param2^b param3^c ... = x \pm y.

- file_root.corr contains parameter correlations

- file_root.covmat contains a covariance matrix you can use as a proposal matrix for generating future chains

Plotting

GetDist can be used to produce basic simple scripts for various types of plots. You can also just write plotting scripts, or use the GetDist GUI, in which case there is no need to run GetDist first.

- Interactive plotting

See the GetDist GUI readme for details of hope to use the GetDist GUI to make plots from chains. Even if you ultimately write custom scripts to optimize how things are displayed, this can be a quick and easy way to compare results and see what you want to plot.

- Python plot scripts

See the CosmoMC python readme for details of how to use the plotting library.

If no_plots=F, GetDist produces scripts files to make simple 1D, 2D and 3D plots. The script files produced are called

- file_root for 1D marginalized plots

- file_root_2D for 2D marginalized plots

- file_root_3D to make coloured 2D samples plots

- file_root_tri to make 'triangle' plots (example. Set triangle_plot=T in the .ini file)

Parameter labels are set in distparams.ini - if any are blank the parameter is ignored. You can also specify which parameters to plot, or if parameters are not specified for

the 2D plots or the colour of the 3D plots getdist automatically works out

the most correlated variables and uses them. The data files used by auto-generated plotting scripts are output to the plot_data directory.

Convergence diagnostics

The getdist program will output convergence diagnostics, both short summary information when getdist is run, and also more detailed information in the file_root.converge file. When running with MPI the first two of the parameters below can also be calculated when running the chains for comparison with a stopping criterion (see the .ini input file).

- For multiple chains the code computes the Gelman and Rubin "variance of chain means"/"mean of chain variances" R statistic for each parameter using the second half of each chain (reference). The .converge file produced by getdist contains the numbers for each parameter individually. The program also writes out the value for the worst eigenvalue of the covariance of the means, which should be a worst case (catching poor chain coverage in directions not aligned with the base parameters). This "R-1" statistic is also used for the stopping criterion when generating chains with MPI.

If the numbers are much less than one then the second half of each chain probably provides an accurate estimate of the means and variances. If the distribution is bimodal and no chains find the second mode low values can be misleading. Typically you want the value to be less than 0.1, or lower if you want means to high accuracy or to make nice 2D density plots (0.01 is often fine). Low values do not guarantee that small percentiles will be measured accurately (though it certainly helps), or that the value won't increase as you run longer and probe further into the tails.

-

For MPI runs, in addition to specifying a stopping criterion as above, you can also give a convergence criterion for a confidence limit. In general it is much harder to get an accurate value for a small confidence value than for the mean, so imposing a tight limit may make your chains run for a very long time (though you can then be pretty confident in your confidence). The value computed is the variance of the chain percentiles from the last half of the different chains, in units of the standard deviation of each parameter. You can either specify a particular parameter to check, or do all of them. Any parameter with a slowly explored tail will only converge very slowly, in which case you may be able to improve things by generating chains at a higher temperature (so the tails are explored more readily; GetDist can adjust for the temperature automatically), or re-parameterizing.

- The auto-correlation length gives a measure of how independent the samples are, with the total number of samples divided by the length giving an estimate of an effective number of independent samples.

Two auto-correlation lengths are computed, in 'weight' units and 'sample' units. The latter is in units of the number of rows in the chain file, the former is the "weight mass' that determines the correlation length; for definitions see the GetDist notes.

-

For individual chains (before importance sampling) getdist computes the Raftery and Lewis convergence diagnostics. This uses a binary chain derived from each parameter depending on whether the parameter is above or below a given percentile of its distribution (specified by the converge_test_limit input parameter). The code works out how much the binary chain needs to be thinned to approximate a Markov process better than a second order process, and then uses analytical results for the convergence of binary Markov chains to assess the burn in period. It also assesses the thin factor needed for the binary chain to approximate an independence chain, which gives an idea of how much you need to thin the chain to obtain independent samples (i.e. how much you can get away with thinning it for importance sampling, though thinning isn't entirely lossless). The .converge file contains the numbers for each chain used, getdist writes out the worst case. See this reference for details.

-

A simpler way to assess the error on quantile limits is by computing them using subsets of the samples. GetDist produces the file_root.converge contains the 'split-test' rms change on the parameter quantiles in units of the standard deviation when the samples are split into 2,3 or 4 parts. Small numbers are clearly better. A number of 0.5 for a 2-way split indicates that the 95% confidence limit may in fact be off by the order of half a standard deviation (though probably better, as the limit is computed using all the samples). Large values in a particular parameter may indicate that there is a long tail that is only being explored slowly (in which case generating the chain at a higher temperature or changing parameters to the log might help).

Differences between GetDist and MPI run-time statistics

GetDist will cut out ignore_rows from the beginning of each chain, then compute the R statistic using all of the remaining samples. The MPI run-time statistic uses the last half of all of the samples. In addition, GetDist will use all the parameters, including derived parameters. If a derived parameter has poor convergence this may show up when running GetDist but not when running the chain (however the eigenvalues of covariance of means is computed using only base parameters). The run-time values also use thinned samples (by default every one in ten), whereas GetDist will use all of them. GetDist will allow you to use only subsets of the chains.

Parameterizations

Performance of the MCMC can be improved by using parameters which have a close to Gaussian posterior distribution. The default parameters (which get implicit flat priors) are

- omegabh2 - the physical baryon density

- omegach2 - the physical dark matter density

- theta - 100*(the ratio of the [approx] sound horizon to the angular diameter distance)

- tau - the reionization optical depth

- omegak - omega_K

- mnu - the sum of the neutrino masses (in eV)

- nnu - the effective density parameter for neutrinos Neff

- w - the (assumed constant) equation of state of the dark energy (taken to be quintessence)

- ns - the scale spectral index

- nt - the tensor spectral index

- nrun - the running of the scalar spectral index

- logA - ln[10^10 A_s] where A_s is the primordial superhorizon power in the curvature perturbation on 0.05Mpc^{-1} scales (i.e. in this is an amplitude parameter)

- r - the ratio A_t/A_s, where A_t is the primordial power in the transverse traceless part of the metric tensor

Parameters like H0 and omegal (ΩΛ) are derived from the above. Using theta rather than H0 is more efficient as it is much less correlated with other parameters. There is an implicit prior 40 < H0 < 100 (which can be changed).

The .txt chain files list derived parameters after the base parameters.

The list of parameter names and labels used in the default parameterization is listed in the supplied params_CMB.paramnames file.

Since the program uses a covariance matrix for the parameters, it knows about (or will learn about) linear combination degeneracies. In particular ln[10^10 A_s] - 2*tau is well constrained, since exp(-2tau)A_s determines the overall amplitude of the observed CMB anisotropy (thus the above parameterization explores the tau-A degeneracy efficiently). The supplied covariance matrix will do this even if you add new parameters.

Changing parameters does in principle change the results as each base parameter has a flat prior. However for well constrained parameters this effect is very small. In particular using theta rather than H_0 has a small effect on marginalized results.

The above parameterization does make use of some knowledge about the physics, in particular the (approximate) formula for the sound horizon.

To change parameterization make a new .paramnames file, then change sources/params_CMB.f90 to change the mapping of physical parameters to MCMC array indices, and also to read in your new .paramnames file.

Likelihoods that you use may also have their own nuisance parameters.

Using CosmoMC as a generic sampler

You can use CosmoMC to sample any custom function that you want to provide. To do this

- Write your own likelihood in GenericLikelihoodFunction in calclike.f90

- Adapt params_generic.ini appropriately for your parameter numbers and settings (see comments there)

- Run using your .ini file as normal

You can use named or un-named parameters. Making your own .paramnames file to name your parameters usually makes things easier (except possibly if you have a very large number of parameters where number labels make sense).

Programming

The code makes use of Fortran 2003 features and structures. There is automated code documentation, which includes modules, classes and subroutines. File and class documentation gives inheritance trees to help understand the structure, for example GeneralTypes.f90 contains the key base classes that other things are inherited from.

You are encouraged to examine what the code is doing and consider carefully

changes you may wish to make. For example, the results can depend on the

parameterization. You may also want to use different CAMB modules, e.g.

slow-roll parameters for inflation, or use a fast approximator. The main

source code files you may want to modify are

-

settings.f90

This defines the maximum number of parameters and their types.

-

Calculator_Cosmology.f90 and Calculator_CAMB.f90

Routines for generating Cls, matter power spectra and sigma8 from CAMB.

Override the calcualtor class in Calculator_Cosmology.f90 to implement cosmology using other calculatorse.g. a fast approximator like PICO,

or other Boltzmann code.

etc.

- DataLikelihoods.f90

This is where you can add in new likelihood functions

- driver.F90

Main program that reads in parameters and calls MCMC or post-processing.

- propose.f90

This is the proposal density and related constants and subroutines. The efficiency

of MCMC is quite dependent on the proposal. Fast+slow and fast parameter subspaces are proposed separately. See the paper for a discussion of the proposal density and use of fast and slow parameters.

Add-ons and extra datasets

Various extended data sets require some code modifications. After testing these may be included as part of the public program, but meanwhile can be

installed manually or obtained from the cosmomc git repository (access available on request).

Version History

- June 2021

- May 2020

- July 2019

- Added Planck 2018 likelihoods and associated files.

- Minor updated Pantheon (batch3/Pantheon18.ini)

- batch3/HST_Riess19.ini for latest H0

- Add DR14 Lyman-alpha BAO (thanks Pablo-Lemos)

- Bug fixes and misc compatibility improvements

- Updated to latest GetDist

- GetDist GUI supports Python 3 with PySide2

- Make Planck2018 branch for fixed version consistent with that used for release (uses CAMB July 2018)

- Updated to CAMB version 1.0.7. (note that this changes likelihood values slightly compared to Planck2018 branch; also HMcode

version changes for numerical stability and speed)

- October 2018

- Added DES 1 Yr data (batch3/DES.ini and variants, likelihood is implemented natively in wl.f90)

- Added BICEP-Keck 2015 likelihood and data (batch3/BK15.ini)

- Misc updates to CAMB and getdist (see github history)

- Fix bug settings nnu<3.046 and degenerate neutrino masses

- Fixed gfortran issue with checkpointing

July 2018

- Added Planck 2018 lensing likelihoods (batch3/lensing.ini and batch3/lensonly.ini)

- Added Riess et al. SH0ES H_0 constraints in (in batch3/)

- New wl.f90 for galaxy lensing and cross-correlations (actual DES likelihood files not provided until published)

- batch3/ .ini files settings for Planck 2018 runs (excluding 2018 CMB likelihoods which are not released yet)

- Added element abundance likelihoods and new BBN interpolation grids for Parthenope and PRIMAT codes

- Added SPT-SZ 2500d TT (thanks Christian Reichardt)

- CMBlikes now supports aberration correction parameter (thanks Christian Reichardt)

- Added BAO consensus: batch3/DR12_BAO_consensus.ini and batch3/DR12_FS_consensus.ini. BAO module updated to support DM measurements, measurement files without error columns, reading either covariance or inverse covariance, support for multiple specified zeff.

- Python grids now have getdist_options dictionary to specify grid-level getdist parameters (so now no batch3 equivalent of batch2/getdist_common.ini)

- Parameter grids support python filters functions that act over all chains (e.g. to import zre<6.5 prior) and partial support for recursive importance sampling (only filtering)

- Built-in support for power-law extrapolation of matter power P(k) beyond kmax to extrap_kmax (700h/Mpc by default)

- added "astro" parameterization using flat prior in H0, omegam, omegab, logA

- Fixed output of first sample when importance sampling with redo_skip

- Importance sampled chains with redo_skip now produce .properties.ini to flag chain as burn-removed

- CMBlikes optimizations and fixes. BKP nuisance parameters now used as fast parameters.

- GetDist changes:

- Improvements to triangle_plot for the upper triangle

- get_axes_for_params() function for easier post-manipulation of axes

- plots now support MixtureND instances for plotting Gaussian mixture models directly (or just GaussianND, Gaussian2D)

- plots add_2D_covariance function to directly plot contours from given mean and covariance

- add_2D_mixture_projection function to plot specific 2D marginalized contours for MixtureND Gaussian mixture

- settings parameters: auto_ticks whether to use matplotlib 2 'auto' tick numbering (does not work well for grids of plots, default: False);

- thin_long_subplot_ticks, whether to try to prevent x-axis label overlap when long numbers in tick labels (default: True)

- samples getParamSampleDict() function to get dictionary of parameter values for given sample number

- samples saveChainsAsText() function to re-save set of chain files (with ranges and names)

- gaussian_mixtures classes now have paramNames, and getUpper/getLower functions for easier integration with plotting scripts; GaussianND convenience special-case class.

- paramNames can now be instantiated with list of labels (rather than having to call separate function)

- ParamBounds fixedValue and fixedValueDict functions to get fixed parameter values

- alpha_scatter option to _3d plots, to plot all samples alphaed by their weights

- Better support for reading nested-sampling chains: now ignores all samples with weight less than min_weight_ratio=1e-30 of the maximum weight

- MCSamples.addDerived() function now supports specification of parameter range

- Various misc fixes

November 2016

- Added .derived_ranges files (in /paramnames folder) to specify hard priors on derived parameters. These are now propagated to output chain .ranges file along with H0 and z_rei priors (and hence limits do not need to be manually specified when running GetDist).

- Added neutrino_hierarchy (={normal, inverted, degenerate}), to support one or two distinct eigenstate approximation to oscillation constraints.

- python/runGridGetDist.py script now uses python getdist. Batch3-based grids no longer use getdist_common.ini, but default getdist settings (+ what's set in the grid setting file).

- Bicep BK14 data files and CMB_BK_Planck update (thanks Colin Bischoff)

- Support for BAO .dataset files with no measurement_type keyword (set in data file for each point instead)

- Updated getdist to 0.2.6 (fixing compatibility issue with latest matplotlib for 2D contours)

- Update CAMB to Nov 2016 version

June 2016

- CAMB updated to May 2016 version (including HMCode Halofit support)

- Added DR12 BAO (in batch3/, thanks Tom Charnock)

- Added batch3/HST_Riess2016.ini

- Added include_fixed_parameter_priors input option (default false, as before)

- Latest GetDist version and small fixes

July 2015

- Data Updates:

- Settings (batch2/) for the Planck 2015 likelihood release. Planck lensing and BKP likelihoods are native and included with cosmomc, others can be linked to the Planck likelihood code.

- Added SZ likelihood, szcounts.f90, as used by the Planck 2015 cluster paper

- BKP likelihood update to include tests including E; small fix to covariance matrix (does not significantly change results)

- action=4 test run has option to check likelihoods gives expected values (as test_planck.ini)

- New GetDist:

- Major rewrite of Python GetDist (python/GetDist.py), which now supersedes the Fortran version

- Kernel density estimates in 1D and 2D now use optimized kernel bandwidth selection, linear boundary correction and multiplicative bias correction;

for details and references see the GetDist Notes. Generally much more accurate, and also works with multi-modal distributions.

- Convergence stats include correlation length in both sample and weight units (generalization so applicable to importance-sampled chains; see the GetDist Notes)

- GetDist GUI (now python/GetDistGUI.py) can show convergence, margestats and likestats, latex tables, and option for shaded contours

- GetDist GUI options menu windows to easily change and immediately preview different analysis and plot settings; option to change the default style module (getdist.plots or planckStyle provided)

- GetDist GUI Script Preview tab for WYSIWYG plot preview of generated and custom scripts

- GetDist available separately as a pip-installable python package

- Reorganized python code into modules (paramgrid, getdist and getdist.gui)

- Plot scripts and GUI can now compare named chains from multiple grids/directories (chain_dir=[dir1, dir2...]; note chain names must be distinct)

- GetDistPlots now getdist.plots; added options to plot above and below diagonal in triangle plot; various fixes

- Grid scripts compatibility with Grid Engine (OGE/SGE, as installed e.g. on StarCluster Amazon images)

- Added project files for the CodeBlocks Fortran development environment (using CosmoMC Makefile)

- Changes for compatibility with gfortran from GCC 6 trunk (which fixes critical GCC Fortran 2003 bugs that affect GCC 5.1 and lower).

Updated development environments available with CosmoBox

- Fix for small inconsistency in how neutrino Neff was set in Calculator_PICO. Added warning if attempting to run with PICO when compiled with -openmp (fails for unknown reason; just remove openmp form Makefile and rebuild).

- Tidied up Makefiles, with auto-detection of ifort and gfortran (only gcc 6+ works)

- Support for Python 2.7 and Python 3.4+

February 2015

- Updated docs for analysing Planck 2015 chain results

- Added batch2/outputs/ sample scripts as used in Planck 2015 results

- Include old 2013 Planck cliklike.f90 for use of old likelihoods until new ones available.

January 2015

- Added BICEP-Keck-Planck likelihood (native CosmoMC; include batch2/BKPlanck.ini)

- New GetDist python version, updated plotted and graphical user interface:

- Run python/GetDistGUI.py (required PySide python module to be installed): make 1D, 2D, 3D plots, select parameters and chains, export PDF or script

- Pure-Python version of GetDist, python/GetDist.py (not all options supported yet)

- GetDistPlots can now generate densities on the fly from chains without running GetDist first (old method still supported)

- Many plotting generalizations, including new rectangle plots, customization of colours and lines, mixed filled/line contour legends, etc.

- MCSamples.py module for script access generalized getdist function: loading chains, getting margestats/limits, density estimates, adding new derived parameters, PCA, etc.

- CAMB updated to January 2015 version, with support for more transfer function options, sigma8 variations, halofit version selection, and new derived parameters

- Data Updates:

- New weak lensing module, using Weyl spectrum from CAMB, with various data cuts of the CFHTlens data (see Heymans et al.) (thanks Adam Moss)

- BAO module restructured, and supports a number of points with any of fsigma8, DA, H, Dv/rs, rs/DV, rs H, and with a correlation matrix

- Added RSD/BAO measurements from Samushia et al. and Beutler et al. (thanks Adam Moss)

- Added BAO from DR7 MGS (arXiv:1409.3242, thanks Lado Samushia), and 6DF updated to use rs/Dv.

- New Abundances module for Yp and D/H abundance measurement likelihoods

- Grid scripts:

- New optional script parameters to select more specifically in various ways

- deleteJobs.py and corresponding functions in jobqueue.py

- listGrid.py to view chain names in grid

- batchJob functions to resolve actual chain name given param and data tags (in any order)

- Matching of .covmat names using normalized name tags

- Numerous other small generalizations

- Simplified installation of grid chain download

- Importance sampling (action=1):

- Now support redo_from_text with redo_skip and redo_thin (no need for .data files)

- New redo_nochange option to calculate new likelihood chi2 derived parameters without affecting weights

- Bug fixes

- Updated bbn_consistency interpolation table from Parthenelope fits

- New default derived parameters, added if appropriate; .paramnames files moved into paramnames/ folder

July 2014

- Python job submission and queue scripts generalized for better portability and speed; can use environment variables and job script tokens to specify job submission defaults (job templates for specific machines may need to be updated; new sample template provided for MOAB scheduler)

- makeTables.py: new options including showing parameter shifts to a reference in sigma units

- makePlots.py: several new options for different cross-grid data comparisons and triangle plots

- makeGrid.py: various small new options and functions (make need to re-run makeGrid on existing grids to update)

- runbatch.py: option for combining several runs into one job (--runsPerJob)

- copyGridFiles.py: new script to easily copy chains or getdist results from part of a grid (copy to directory or directly into zip file)

- Fixed default ranges for JLA alpha parameter in batch1/JLA.ini. Updated JLA likelihood to allow JLA_marginalize=T for importance sampling with internal grid integration (as SNLS, but now non-square grid).

- Added DR8 BAO likelihood for completeness (thanks Hee-Jong Seo)

- Fixed bug in underlying bicubic interpolation (Interpolation.f90); caused crashes with simple mpk runs and possibly more general issues

- Fixed bug in production of .data files by importance sampling

- Added more helpful "-h" help info for some python scripts

May 2014

- HST module updated to read H0 value and error from .ini file (allowing easier modification/variation testing)

- Fixed bugs in writing out likelihoods for importance sampled chains, setting grid run importance sampling output directories, and writing 'derived' likelihoods for each chain position; other minor fixes

- python/makeTables.py has new option changes_adding_data to give shifts in parameter means (in sigma) between runs with and without given data

- python/makePlots.py has new option compare_alldata to make plots comparing all data combinations for each parameter combination

April 2014

- CAMB update to April 2014 version; cosmomc support added for running of running (nrunrun) and running tensor tilt (ntrun). Using inflation_consistency now uses second order consistency relations for nt and ntrun.

- Added tensor pivot parameter tensor_pivot_k to specify pivot scale for r independently of the scalar pivot scale. This uses the new CAMB tensor parameterization (tensor_parameterization=2) so that At = Pt(tensor_pivot_k) = r Ps(tensor_pivot_k) for any scalar pivot, tilt and running.

- Re-ordered derived parameters, and added new tensor derived parameters r0.01 (rBB), log(1010At) (logAT), and 109At (AT), 109 At e-2τ (ctlamp)

- Chains include "derived parameters" giving the χ2 [-2Ln(Likelihood)] for each likelihood used, and where more than one of a given type, the sum for each type (e.g. CMB, BAO).

- Added partial support for PICO calculator (cosmology_calculator = PICO in .ini file, test_pico.ini example provided); only CL and some parameters supported currently

- action=4 now outputs timings for each likelihood (only for first time run, so does not account for any caching); new test_output_root puts out the theory CL (+ any derived likelihood data) at the test point

- action = 1 (importance sampling) will now by default (redo_auto_likescale=T) automatically re-scale likelihoods to give O(1) weights if needed

- action = 1 (importance sampling) new has options redo_output_txt_theory, redo_output_txt_dir to output text files with theory CL and data point values for all points in the chain

- Parameter lmin_store_all_cmb to force output of all cl up to lmin_store_all_cmb even if not used by likelihoods

- Output derived parameter H(z) is now in standard km/s/Mpc units

- Bug fixes, including MPK fix for when only one redshift

- GetDist has new optional second parameter to specify file_root, e,g getdist distparams.ini chains/test

- GetDist chain_num can now either be unspecified or -1 in order to read as many chains as exist

- python/makeTables.py has new options delta_chisq_paramtag to specify reference parameter model for quoting Δχ2 to (default is baseline model), changes_from_datatag to give shifts in parameter means (in sigma) relative to the specified data tag at the same parameter combination, and changes_from_paramtag to give shifts relative to some baseline parameter combination

- python/batchJob.py has new convenience jobGroup class for optional use in grid setting files; dataSet class has add method to modifying existing content

- Python 3d plots now set hard bounds automatically (as 2d)

March 2014

- Major restructuring of main code using Fortran 2008: structure supports additional cosmology calculator codes, etc. Use ifort 14+.

- Re-written CMB likelihoods; new CMBlikes module replaces the (mis-named) Planck_like, with support for exact forecasting and binned HL likelihoods (e.g. BICEP2); runtime determination of L-ranges and fields needed. (.data format changed); old CMB data format support removed for now.

- Added BICEP2 data likelihood files (in ./data/BICEP/)

- New JLA supernovae likelihood module (arXiv:1401.4064)

- Added DR11 BAO module changes and data from SDSS

- Mpk functions now use 2D bicupic interpolation (thanks Jason Dossett)

- Added sterile_mphys_max parameter to set prior on maximum physical mass (in eV) of a thermal massive sterile neutrino, 10eV by default.

- MatterPowerAt_Z interpolation bug fix

- .likestats files include mean likelihood numbers; python tables updated accordingly

- python/runMPI.py added to replace perl scripts; based on a templated queue submission script, e.g. provided job_script.

- python makeGrid settings: lists of .ini files can now be, or include, python dictionaries of parameters rather than .ini files; job data_set can be a dataSet() item

- CAMB update: updated halofit fitting parameters for neutrino models (thanks Simeon Bird); DoTensorNeutrinos is now on by default.

December 2013

- Updated BBN module, using interpolation table (from AlterBBN) with updated neutron decay constant and converting from BBN to total mass conventions for YP. Uses new Interpolation module with reusable 2D bicubic interpolation class. Changes in YP are O(1%) and hence have only tiny effect on other parameters.

- Fixed bugs when using LSS, including one giving wrong sigma8 (thanks Matteo Costanzi)

- CAMB updated to December 2013 version (minor changes)

November 2013

- CAMB updated to November 2013 version (fixes problem with e.g. tensor runs)

- Python grid scripts bug fixes and minor new options

October 2013

- CAMB updated to October 2013 version (inc. Jason Dossett changes to support non-linear lensing and non-linear MPK, changing numerical results slightly; fix for ppf bug that significantly changes results at low L for w>>-0.8, thanks David Rapetti and Matteo Cataneo)

- Added WiggleZ and MPK modules, and corresponding general changes for likelihoods using the linear or non-linear matter power spectrum as a function of redshift. Double interpolation is now avoided, and combinations of requirements for different likelihoods are automatically internally combined. (All thanks to Jason Dossett)

- Added halofit_ppf (Jason Dossett)

- Minimization now has more options, including high-temperature MCMC check, and uses existing .covmat for widths: best fits seems to be a lot more stable now; also MPI support to compare result from multiple minimizations (not usually required)

- Added checkConflicts to likelihood class (so new likelihoods can check full list for conflicts and abort if necessary e.g. BAO/MPK) and added .dataset file-based likelihood ancestor class, with conflict specification input parameters

- New python plotting options and Planck default settings; sample Planck output scripts updated

June 2013

- Fix getdist bounds outputs when plot_params is set and triangle plot data generation

- Fix for cosmomc runs with no covmat; more robust checkpoint file output

April 2013

- [23 April] fix to work with default test_likelihood parameter (F)

- Automatic thinning by oversample_fast factor for sampling_method=1 (prevents files getting very large)

- oversample_fast meaning slightly changed to give more regular fast updates in standard sampling, and generate oversample_fast standard fast metropolis samples per slow update in the dragging method.

- Tweaks to various internal convergence and update parameters

- GetDist option can be set to m for old matlab outputs or py for similar python plot outputs

- Added getdist parameter converge_test_limit to set limit for convergence tests

- Fixes for runGridGetdist.py, and default to producing .py python plot files in each dist folder rather than matlab .m.

- Renamed matlab_subplot_xx etc to subplot_xxx

- Various other minor bug fixes, tidied up MCMC.f90 code (for the moment removing old un-updated sampling methods)

March 2013

- Sampling

- New fast-slow sampling_method=7, using new fast/slow blocked decorrelation scheme (paper) and fast dragging following Neal 2005. This is recommended for Planck.

- fast_param_index specifies that all parameters with number greater or equal to fast_param_index should be treated as fast. Can alternatively use fast_parameters to set specific parameter to be treated as fast.

- dragging_steps determines the number of fast steps per slow step. In total dragging_steps x num_fast metropolis steps are taken in the fast direction for each slow step. Does not need to be an integer, 2-3 works well for Planck.

- for fine-tuning block_semi_fast boolean defines whether initial power spectrum parameters are treated as separate blocks, block_fast_likelihood_params determines if fast parameters are blocked depending on likelihood dependencies. Both true (default) is usually fastest.

- Grids of models and python utilities

- A new suite of python scripts for running CosmoMC for grids of models, running GetDist over the result, management, analysis and plotting. See the python scripts readme

- Structure

- Restructuring using Fortran 2003 features (probably does not compile on gfortran, ifort 13 OK); likelihoods and theory now only re-computed as required as parameters change (enables the fast-slow sampling method to work). Each likelihood specifies its own theory and nuisance parameter requirements.

- Data likelihoods now specify their own .paramnames file for any nuisance parameters (samples in ./data). Combining theory and data parameters is done internally, there's no need to change hard-coded parameter numbers when new nuisance parameters are added, and unused likelihood nuisance parameter are no longer output. Each likelihood function is passed the array of its nuisance parameters, completely independent of what other likelihoods are doing (but multiple likelihood functions can use the same nuisance parameter names when they are the same parameter). Likelihoods specify their speed (<0 is slow, >=0 is fast, with higher faster), and are internally sorted to be in speed order before use.

- Reorganized parameterization class and module implementation; can now set H0_min and H0_max as .ini input parameters. Added parameterization=background option (e.g. for supernovae-only runs): parameter numbers can now be changed dynamically without re-compiling.

- CAMB and cosmology

- CAMB (and CosmoMC) updates fix issues with larger neutrino masses and non-linear calculation in very closed models (Mar 2013 version)

- Added support for massive neutrinos with varying n_eff (nnu), treating massive as nearly thermal and the rest as massless (for n>3.046), or reducing the temperature of the massive neutrinos for n<3.046.

- Added use_lensing_potential and use_nonlinear_lensing (currently can't be used with any LSS data; also note that it is not calculated consistency when only the initial power spectra are changed). Changed default num_cls_ext=1.

Lensing potential accuracy increased for use_lensing_potential=T.

- Derived parameters now include r_s/D_v(0.57)

- T_CMB changed to 2.7255

- lmax_computed_cl to define CAMB maximum L; higher L are approximated by scaling fixed template file (highL_theory_cl_template), for use where non-primordial contributions dominate.

- Input parameter num_massive_neutrinos for use with the mnu parameter (e.g. can fix to 0.06 with num_massive_neutrinos=1 to approximate minimal hierarchy)

- Links by default with CAMB simplified ppf module for varying w (parameter wa).

- Base parameters changed to use mnu rather than fnu; several new chain and derived parameters supported and output by default.

- GetDist

- parameter credible_interval_threshold to determine when credible intervals or tail integrals are used

- .margestats has new format, with separate limit type for each confidence

- Prior ranges set automatically for MCMC parameters from .ranges file

- applies 1D smoothing scale relative to standard deviation, or semi-optimal: smooth_scale_1D

- 2D plots use adaptive elliptical kernel density, smooth_scale_2D sets smoothing scale relative to posterior width or bin size.

- Removed sm support. Added python/GetDistPlots.py with fairly general plotting support.

- R-1 statistics now use all samples left after cutting ignore_rows. The value is redefined more uniquely to be the worst eigenvalue of the covariance of the chain means of mean-covariance-orthonormalized parameters

- Change for making large arrays of 1D plots not too small; matlab_subplot_size_inch, matlab_plot_output parameters

- Likelihoods

- Support for the Planck likelihood

- Added SNLS supernovae (with option for internal marginalization) and updated Union to Union 2.1

- BAO data up dated to BOSS DR9 final results (DR7, DR9 and 6DF). Note the code is not identical, cosmomc computes the drag sound horizon numerically (recalibrated to be consistent in the fiducial model); also be aware of correlation with Wigglez.

- HST data updated to Riess et al: 1103.2976 (from BOSS mod)

- Updated for WMAP9 (edit the Makefile in your wmap_likelihood_v5 directory appropriately first).

- Input parameters use_WMAP_TT_beam_ptsrc, use_WMAP_TE, use_WMAP_TT that can all be set to false to use only WMAP low L

- added prior[xxx]=mean std inputs to specific Gaussian priors on parameters

- removed Use_BBN, use_mpk and others that are currently not updated

- a large number of old files in data/ have been deleted

- Importance sampling

- Added redo_like_name for importance sampling when only one likelihood is changed

- Added redo_no_new_data option to redo only a specific likelihood when importance sampling

- Added redo_add to only calculate changes due to new set of likelihoods (without re-computing old ones)

- .data format for importance sampling re-organised; can now be re-used if unused parameter ordering and numbering changes

- Minimization

- Minimizer uses fast and slow subspaces for speed and sanity check on convergence

- Likelihood/chi-square values for the separate data sets tracked separately (as well as total), and output in .minimum file

- start_at_bestfit = T option to start chains at best fit point (if true, be careful with convergence since non-dispersed)

- Output .bestfit_cl files with best-fit C_L from action=2 runs. .minimum files now include latex labels (from .paramnames)

- action=3 to find best-fit and hessian (if it works!), and then stop; output .hessian.covmat file and best-fit

- Output chains .txt files only include parameters that are actually varied (specified in .paramnames file; .ranges file specifies all parameters with ranges, including fixed parameters)

- Output .initparams file for each chain giving input parameters used

- Can be compiled in single or double precision (double is new default)

- Added many Planck .covmat files and common/batch input parameter set .ini files.

- Numerous other things

October 2012

- New best-fit finding routine (action=2) uses Powell's 2009 BOBYQA routine (faster and reliable with bounded

parameters). Best-fit parameter values written out including derived parameters.

- estimate_propose_matrix outputs file in named parameter .covmat compatible format and copes better with parameters with hard prior cuts

- CAMB October 2012 update: tweaked recfast model and support for compiling CosmoMC with CosmoRec and HyRec recombination codes; updated halofit model

- .ini files support reading in file of default parameter values that can then be overridden (greatly reduce duplication between similar runs), e.g. DEFAULT(baseparameter_defaults.ini); can be used in nested way, and with INCLUDE.

January 2012

- Fixed generation of .paramnames file when using post processing (importance sampling)

- CAMB update for January 2012 (~1e-3 improvement in interpolation error, slightly faster for high accuracy); see arXiv:1201.3654

- Added compare_means.m matlab script to graphically compare differences between posterior means from two or more .margestats files with the same parameters (like this)

- Increased precision in GetDist's .margestat files

- Included a couple of extra Planck-like .covmat files for non-flat models and massive neutrinos

October 2011

- New BAO multiple-dataset module including Wigglez (thanks to Jason Dosset)

- stop_on_error parameter, if F global_error_flag>0 from CAMB results in a rejected point rather than stop

- Fix to setting fractional number of neutrinos since July CAMB version (thanks to Zhiqi Huang)

- CAMB Oct 2011 update: high_accuracy_default precision in non-flat models, minor tweaks, and error flag

- Makefile updates

August 2011

- Changes to params_CMB.f90 for gfortran 4.6 compatibility

July 2011

- Updated matlab colormaps in mscripts directory; added color_for_bw.m (yellow-blue/black, which should look consistent printing in B&W).

- all_l_exact likelihood now uses fsky rather than fsky2 scaling at all L

- GetDist changes to use more IO wrapper functions

- %DATASETDIR% and %LOCALDIR% placeholder support for all input file names; custom_params input parameter to read and store additional parameter file in CustomParams object (settings.f90), also used as optional additional placeholders.

- .ini files now support INCLUDE(filename) to share common parameters between files. INCLUDES can be nested.

- MPI job now finishes neatly (not aborted) if convergence criterion is achieved

- num_cls now set to 4 by default (include BB). C_l .data files now store every L by default

- CAMB updated to July 2011 version; new high_accuracy_default input parameter to target 0.1%-accuracy on small scales

- Added directory of generic python scripts; simple example to make fake perfect CMB dataset with given beam and noise

- Cosmologui compatibility

May 2010

- Sets helium abundance using BBN consistency ignoring very small error bar (bbn.f90 thanks to Jan Hamann). Set bbn_consistency=F to fix to old default value of 0.24.

- Added nnu (effective number of neutrinos) and YHe (helium fraction) to cmbtypes.f90; now easier to make these varying parameters [by default nnu is fixed to 3.046 and YHe set from BBN consistency)

- Updated supernovae.f90 to Union 2 (Nao Suzuki)

- Updates to BAO for more general acoustic scale calculation (thanks to Jan Hamann and Beth Reid)

- Covariance matrices (.covmat) now only include varied parameters and have a header giving names of parameters used (so much easier to reuse if parameters are re-ordered or other parameters added)

- Aded local_dir parameter to change default location for .covmat and .paramname files

- New GetDist options

- line_labels=T to write out legend of roots being plotted (matlab)

- finish_run_command to run system command when getdist finished (e.g. "matlab < %ROOTNAME%.m" to make 1D plot)

- no_triangle_axis_labels to suppress axis tick labels (except on edges) when making large triangle plots

- Makefile updated for simpler MKL linking with ifort version 11.1+

Jan 2010

- Updated to use WMAP 7-year likelihood

- Updated CAMB to Jan 2010 version - main change is use of RECFAST 1.5 (up to 2% change in Cl at l=2000)

- Added ParamNamesFile optional input parameter for cosmomc

- Added basic support for CMB lensing reconstruction power spectrum, and full-sky implementation in Planck_like.

October 2009

- [9th Nov] changed use_bao to use_BAO in the sample .ini file

- [27th Oct] fixed GetDist bug in credible intervals with prior cutoffs (Jan Hammann); fixed all_l_exact

- [27th Oct] fixed problems with .newdat files, and sometime-problem in LRG likelihood; updated ACBAR and BICEP dataset; COSMOS computer detected by Makefile

- Support for naming parameters to simplify changing number of parameters and parameterization. Transfer of names and labels between cosmomc chains and getdist via .paramnames files (should be backwards compatible with old .ini files). Reference indexed .ini parameters by name, e.g. param[omegambh2] as alias for param1. Give getdist plot parameters

as name lists (e.g. H0 omegam tau).

- Added SDSS LRG dataset (thanks to Beth Reid; many changes to mpk.f90; to use must have EXTDATA = LRG in the Makefile)

- supernovae.f90 now replaced by default with SDSS Supernovae compilation (previous code now supernovae_Union.f90; thanks to Wessel Valkenburg); config in data/supernovae.dataset.

- Added simple baryon oscillation option (thanks to Beth Reid)

- Added Quad pipeline 1 dataset (QUAD_pipeline1_2009.newdat). Support for QUAD-format .newdat files.

- Changed HST data to use the latest result (HST.f90; thanks to Beth Reid).

- Added optional data_dir input parameter to use different directory than ./data; .newdat data files read window functions from relative path location.

- Updated CAMB to Feb 2009 version (fixed issue with lensed non-flat models) + change to halofit to include w/=-1 in the background (Beth Reid)

- New IO wrapper module to simplify pipelining.

- Generalized ASZ internally to allow num_freq_params parameters governing frequency-dependent part of CMB signal (default in settings.f90 set to one, ASZ as before - an SZ template amplitude).

- Fixed buffer overflow with CMB lensing and AccuracyLevel > 1.

- Only one source Makefile supplied, and now builds CAMB automatically; if WMAP variable is unset builds without WMAP

June 2008

Fixed problem initializing nuisance parameters. Updated CAMB to June 2008 version (fix for very closed models).

May 2008

supernovae.f90 now replaced by default with UNION Supernovae Ia dataset (previous code now supernovae_ReissSNLS.f90; thanks to Anze Slosar).

Additions to Planck_like module; support for sampling and hence marginalizing over data nuisance parameters, point sources, beam uncertainty modes.

New GetDist option single_column_chain_files to support WMAP 5-year format chains (thanks to Mike Nolta): 1col_distparams.ini is a supplied sample input file. New GetDist option do_minimal_1d_intervals to calculate equal-likelihood 1-D limits (see 0705.0440, thanks to Jan Hamann). New GetDist option num_contours to produce more than two sets of limits.

April 2008

Includes latest CAMB version with new reionization parameterization - default now assumes first ionization of helium happened at the same time as hydrogen, and z_re is defined as the point where xe is half its maximum (the optical depth and z_re are related in a way independent of the speed of the transition in the new parameterization). This changes the z_re numbers at the ~6% level. Fixed bug reading in mpk parameters.

March 2008

Uses WMAP 5-year likelihood code. Added cmb_dataset_SZx and cmb_dataset_SZ_scalex parameters to specify (parameter independent) SZ template for each CMB dataset (WMAP_SZ_VBand.dat included from LAMBDA). Parameter 13 is now ASZ - the scaling of all the SZ templates, as used in WMAP3/WMAP5 papers. Updated supplied covariance params_CMB.covmat for WMAP5. Minor compatibility changes.

February 2008

Added generic_mcmc in settings.f90 to easily use CosmoMC as generic sampling program without calling CAMB etc (write GenericLikelihoodFunction in calclike.f90 and use Makefile_nowmap). Added latest ACBAR dataset. CAMB update (including RECFAST 1.4). New Planck_like.f90 module for Cl likelihoods using approximation of arXiv:0801.0554 (also basic low-l likelihood). Added markerx GetDist parameters for adding vertical lines to parameter x in 1D Matlab plots. Various minor changes/compatibility fixes.

November 2006

Updated CBI data. Compiler compatibility tweaks. Fixed error msg in mpk.f90. Minor CAMB update. Better error reporting in conjgrad_wrapper (thanks to Sam Leach).

October 2006

(20th October 2006)Fixed k-scaling for SDSS LRG likelihood in mpk.f90. Changes for new version of WMAP likelihood code. Added out_dir and plot_data_dir options for GetDist. Minor compatibility fixes.

Added support for SDSS LRG data (astro-ph/0608632; thanks to Licia Verde, Hiranya Peiris and Max Tegmark). CAMB fixes and other minor changes.

August 2006

Improved speed of GetDist 2D plot generation, added limitsxxx support when smoothing = F. Added sampling_method = 5,6, preliminary implementations of multicanonical and Wang-Landau sampling for nasty (e.g. multi-modal) distributions (currently does not compute Evidence, just generates weighted samples). Changed matter_power_minkh (cmbtypes) to work around potential rounding errors on some computers. Updated CAMB following August 2006 version. Added warning about missing limitsxx parameters to getdist. Added MPI_Max_R_ProposeUpdateNew parameter (when varying parameters that are fixed in covmat). Updated CBI data files.

May 2006

Supernovae.f90 updated to use SNLS by default, edit to use Riess Gold. 2dF updated (twodf.f90 file deleted, use 2df_2005.dataset); covariance matrix support in mpk.f90.